10 Real-Life Examples and Applications of AI

Here are 10 real-life examples and applications of AI. Let's get started.

What is Artificial Intelligence?

Artificial Intelligence is a computing technology that helps computers to learn. The objective of this technology is to enable a computer to accomplish human-like activities.

Real-Life Examples and Applications of AI

What are some examples of AI? Today, we use AI several times a day without even realizing it. AI helps us in our daily lives, at work, in communication, while traveling, shopping, driving, and more. The list goes on and on.

Below, we've described applications in detail so you can see how AI impacts our everyday life.

1. Virtual Personal Assistants

In recent years, there has been a surge in the development and implementation of virtual personal assistants (VPAs). These take many forms and leverage technologies such as voice recognition and text analysis. Some even make decisions for you, such as automatically scheduling meetings based on incoming emails! Let’s explore some of these personal assistants.

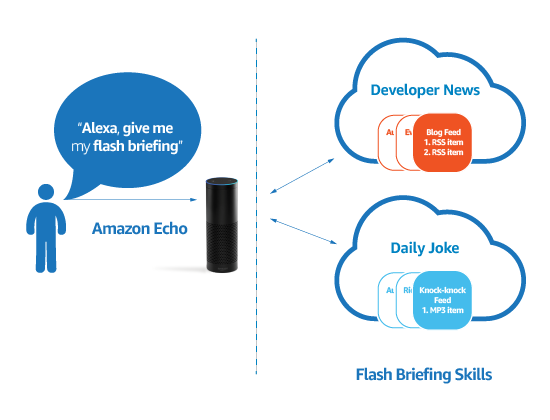

Alexa

Developed by Amazon, Alexa is a VPA made popular by the Amazon Echo and the Amazon Echo Dot. It was released in 2014 and allows you to interact with it simply by speaking to it. Alexa is capable of the following:

- Playing music

- Creating to-do lists

- Setting alarms

- Streaming podcasts

- Playing audiobooks

- Providing weather updates and other real-time information, such as the news

Most devices that have Alexa installed allow users to activate the functionality via a “wake word” such as “Echo” or “Alexa”. Some of the more recent developments as of May 2017 include home orders! Using Alexa, one can now order takeout food from places such as Domino’s Pizza, Pizza Hut, and Just Eat. Starbucks also announced a private beta for placing pickup orders.

Alexa Skills

Alexa is built on Skills. Skills give the platform the ability to understand verbal commands and instructions. They run in the cloud, meaning there is no installation required from an end-user perspective.

In a drive to increase developer adoption of Alexa Skills and enrich the ecosystem, Amazon has been offering up free prizes to developers as part of the Alexa Skills Challenge. In this challenge, developers are tasked with writing an Alexa Skill and can win up to $5,000 in cash!

Concerns

Alexa is popular, but it also has its share of skeptics. This technology could listen to private conversations in the home. Amazon has reassured customers that Alexa-enabled devices only listen to conversations when the “wake word” has been uttered.

Despite this, the device must listen all the time to detect whether the “wake word” has been uttered. Another point worth mentioning is that Amazon uses past recordings to help train Alexa and improve the user experience. These recordings can be deleted, though.

At the end of the day, people who use products like Alexa are ultimately trading privacy for convenience. Internet users‘ attitudes to privacy have relaxed in the past 5-10 years (increased adoption of social channels has driven that).

X.AI

X.AI’s “Amy,” whilst still falling under the category of VPA, is a completely different product from Amazon’s Alexa. If it could be summed up in one sentence, it would be:

The personal assistant who schedules meetings for you

Amy was born out of the founder's personal pain point of scheduling 1,019 meetings in one year alone. As is often the case, emails about these meetings bounced between the respective parties until a suitable date and time were found.

He figured this pain point must be affecting not just him but other information workers, so he set out to build a virtual agent that leverages AI to reduce the amount of email ping pong between work colleagues when trying to schedule meetings.

Amy’s artificial intelligence can analyze communication and determine whether people are talking about arranging a meeting. When it identifies this, Amy examines each person’s diary, finds non-conflicting times, and presents these to all parties in the email or group message.
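The diary check described above can be pictured as an interval problem. The following is a minimal sketch, not X.AI's actual implementation: the function `free_slots`, the helper `at`, and the sample diaries are hypothetical names invented for this illustration.

```python
from datetime import datetime, timedelta

def free_slots(busy_by_person, day_start, day_end, duration):
    """Return (start, end) windows of at least `duration` in which nobody is busy."""
    # Pool everyone's busy intervals and walk through them in start order.
    busy = sorted(slot for slots in busy_by_person.values() for slot in slots)
    free, cursor = [], day_start
    for start, end in busy:
        # Clip each meeting to the working day and skip anything outside it.
        start, end = max(start, day_start), min(end, day_end)
        if start >= end:
            continue
        if start - cursor >= duration:
            free.append((cursor, start))
        cursor = max(cursor, end)
    if day_end - cursor >= duration:
        free.append((cursor, day_end))
    return free

def at(hour, minute=0):
    # Hypothetical helper: a time on an arbitrary example day.
    return datetime(2017, 5, 1, hour, minute)

diaries = {
    "alice": [(at(9), at(10)), (at(13), at(14))],
    "bob": [(at(9, 30), at(11)), (at(15), at(16))],
}
slots = free_slots(diaries, at(9), at(17), timedelta(hours=1))
for start, end in slots:
    print(f"{start:%H:%M} to {end:%H:%M}")
```

A real assistant would also need to handle time zones, preferences, and tentative bookings, which this sketch leaves out.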

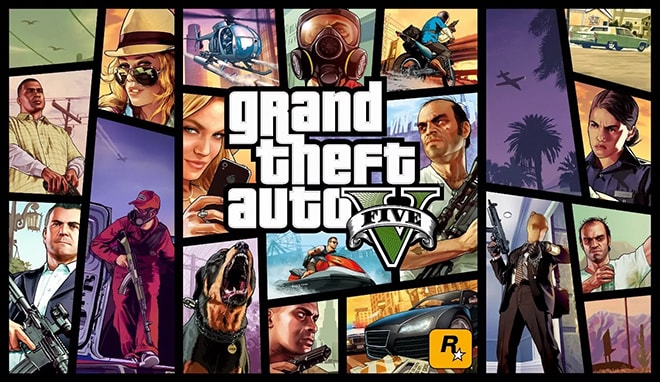

2. Video Games

Crude forms of AI in video games have been around for decades. Take the 80s arcade game Pac-Man, for example: each ghost featured its own form of AI to try to catch the player as they made their way around the maze.

Technology has moved on from the 80s, though, and non-player characters (or NPCs) have become so advanced that entire worlds can now be modeled, rendered, and explored.
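To give a feel for how simple this kind of game AI can be, here is a small sketch of two ghost "personalities" that differ only in the tile they aim for. It is loosely based on commonly described ghost behavior and is an assumption-laden simplification, not the arcade game's actual code; all function names and the grid layout are hypothetical.

```python
# Directions as (dx, dy) steps on a tile grid; y grows downward.
UP, LEFT, DOWN, RIGHT = (0, -1), (-1, 0), (0, 1), (1, 0)

def chaser_target(player_pos, player_dir):
    """A 'chaser' ghost heads straight for the player's current tile."""
    return player_pos

def ambusher_target(player_pos, player_dir, lookahead=4):
    """An 'ambusher' ghost aims a few tiles ahead of the player."""
    return (player_pos[0] + player_dir[0] * lookahead,
            player_pos[1] + player_dir[1] * lookahead)

def next_step(ghost_pos, target, walls):
    """Move to the open neighboring tile closest to the target."""
    neighbors = [(ghost_pos[0] + dx, ghost_pos[1] + dy)
                 for dx, dy in (UP, LEFT, DOWN, RIGHT)]
    open_tiles = [tile for tile in neighbors if tile not in walls]
    return min(open_tiles,
               key=lambda t: (t[0] - target[0]) ** 2 + (t[1] - target[1]) ** 2,
               default=ghost_pos)  # stay put if boxed in

player_pos, player_dir = (10, 5), LEFT
walls = {(3, 4)}
print(chaser_target(player_pos, player_dir))    # (10, 5)
print(ambusher_target(player_pos, player_dir))  # (6, 5)
print(next_step((3, 5), ambusher_target(player_pos, player_dir), walls))  # (4, 5)
```

Giving each ghost a different target function is enough to make them feel like distinct opponents, even though they share the same movement rule.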

Games such as GTA and Call of Duty feature rich digital worlds with numerous NPCs that can, and often do, behave in a human-like manner. All of this is possible due to advancements in artificial intelligence.

Stop!

Consider for a minute an AI researcher at Princeton University, Artur Filipowicz. Filipowicz has been trying to develop software for autonomous vehicles, but part of the problem is that the software must be able to recognize a stop sign. These signs can vary in appearance due to weather conditions or may simply need repair. When a car arrives at a stop sign, it must stop; failing to do so could result in a human fatality.

The image recognition algorithm, therefore, must be able to identify multiple forms of a stop sign.

Filipowicz came up with a novel solution to this problem: GTA V.

In the game GTA V, the player is immersed in a fictional city, Los Santos, which is loosely based on Los Angeles. During the production of the game, Los Angeles was extensively researched. The team organized field research trips with tour guides and architectural historians, and captured around 250,000 photographs and many hours of video footage. These photographs and footage naturally made their way into the level design.

Filipowicz was then able to alter the game in such a way that his autonomous vehicle software could navigate through the graphically rendered roads and, more importantly, identify and respond to stop signs as if it were in real life.

3. Smart Cars

Driverless cars and driverless lorries have been making headlines recently. Companies such as Google, Uber, Apple, Volkswagen, and Mercedes are heavily investing in self-driving automobiles powered by artificial intelligence.

In 2016, by leveraging AI, San Francisco startup Otto (owned by Uber) successfully delivered 50,000 cans of Budweiser using a self-driving truck. From a commercial perspective, integrating AI into long-haul trucking routes will yield cost savings and has the potential to save lives, since AI routines don’t suffer from fatigue.

Gartner predicts that by 2020, there will be approximately 250 million cars connected to each other via WiFi. This will allow them to communicate with each other on the roads. That‘s not too far away at the time of writing this blog.

4. Consumer Analytics and Forecasting

Predictive Shipping

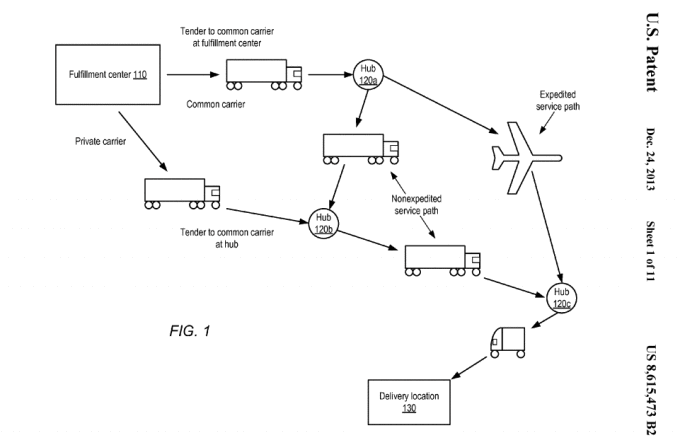

Machine learning and artificial intelligence have been utilized for years to help businesses forecast demand and dynamically set prices. Back in 2013, Amazon patented “anticipatory shipping”. The idea behind this shipping system is to reduce delivery times by predicting what consumers will want before they have even bought it.

One example pre-shipping scenario:

“a method may include packaging one or more items as a package for eventual shipment to a delivery address, selecting a destination geographical area to which to ship the package, shipping the package to the destination geographical area without completely specifying the delivery address at the time of shipment, and while the package is in transit, completely specifying the delivery address for the package.”

Source: TechCrunch

Dynamic Pricing

Estimating the price-to-sales ratio (or price elasticity) can be difficult for retailers; artificial intelligence makes price optimization easier, however. It does this by correlating pricing trends with sales trends, and it can also factor in other variables, such as stock levels. Data Scientist Mohammad Islam wrote an article on this subject, which explains the concept in more detail.
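As a rough illustration of the underlying arithmetic, the sketch below computes the midpoint (arc) price elasticity of demand from two price and sales observations. The function name and the figures are made up for this example; a production pricing system would estimate elasticity from far more data and more variables.

```python
def arc_elasticity(price_old, price_new, qty_old, qty_new):
    """Midpoint (arc) price elasticity of demand between two observations."""
    pct_qty = (qty_new - qty_old) / ((qty_new + qty_old) / 2)
    pct_price = (price_new - price_old) / ((price_new + price_old) / 2)
    return pct_qty / pct_price

# Made-up observation: raising the price from $10 to $12
# cut weekly sales from 100 units to 80 units.
elasticity = arc_elasticity(10.0, 12.0, 100, 80)
revenue_before, revenue_after = 10.0 * 100, 12.0 * 80
print(round(elasticity, 2))           # -1.22
print(revenue_before, revenue_after)  # 1000.0 960.0
```

An elasticity below -1 means demand is elastic: in this made-up case the price rise loses more in volume than it gains per unit, so revenue falls.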

5. Finance

Fraud Detection

Business rules and reputation lists have existed for decades, and many organizations today implement such things to identify fraudulent behavior. A rule contains a statement that is both readable by a human and understandable by a computer. For example, a bank may create a rule that says something like:

“If the customer is purchasing a product that costs greater than $1,500, their location is in Yemen, and they signed up less than 24 hours ago, then block the transaction.”
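Expressed in code, a rule like this is only a few lines long. The following is a minimal sketch of the example rule; the `Transaction` fields and the `should_block` function are hypothetical names chosen for illustration, not part of any real banking system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Transaction:
    amount_usd: float
    country: str
    signed_up_at: datetime
    placed_at: datetime

def should_block(tx):
    """Static rule from the example: high value, flagged location, brand-new account."""
    account_age = tx.placed_at - tx.signed_up_at
    return (tx.amount_usd > 1500
            and tx.country == "Yemen"
            and account_age < timedelta(hours=24))

signup = datetime(2017, 5, 1, 8, 0)
flagged = Transaction(1600.0, "Yemen", signup, signup + timedelta(hours=3))
just_under = Transaction(1499.0, "Yemen", signup, signup + timedelta(hours=3))
print(should_block(flagged))     # True
print(should_block(just_under))  # False
```

The second transaction shows the weakness of a fixed threshold: an order just under the limit passes without question.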

Rules like this are static; over time, they can be gamed by brute force. Criminals can try different combinations of location, monetary value, and so on until a transaction slips through.

Artificial intelligence is changing this, though. By implementing supervised machine learning (SML), the machine can learn from historical datasets that contain fraudulent transactions. The machine can then identify specific patterns that represent a typical fraudulent transaction, whether it be the location, quantity, or type of product.
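To make the idea of learning from labeled history concrete, here is a toy sketch that trains a logistic regression classifier with plain gradient descent. The dataset, the two features (order amount in thousands of dollars and a new-account flag), and all function names are invented for illustration; real fraud models use far richer data and established libraries.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(rows, labels, lr=0.5, epochs=5000):
    """Fit logistic regression weights with batch gradient descent."""
    weights, bias = [0.0] * len(rows[0]), 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for i, xi in enumerate(x):
                grad_w[i] += error * xi
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias

def fraud_probability(model, row):
    weights, bias = model
    return sigmoid(sum(w * x for w, x in zip(weights, row)) + bias)

# Made-up history: [amount in $1000s, 1 if the account is under 24 hours old].
history = [[0.05, 0], [0.20, 0], [0.10, 1], [1.80, 0],
           [0.30, 0], [2.00, 1], [1.60, 1], [2.50, 1]]
is_fraud = [0, 0, 0, 0, 0, 1, 1, 1]

model = train(history, is_fraud)
print(fraud_probability(model, [2.2, 1]))  # large order, new account
print(fraud_probability(model, [0.1, 0]))  # small order, established account
```

Unlike a fixed rule, the decision boundary here comes from the data, so retraining on fresh history shifts it as fraud patterns change.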

Credit Decisions

When applying for credit, whether it be a loan or a credit card, banks must determine whether each customer is creditworthy. Other variables are calculated, such as the credit limit, interest rate, and maximum amount the customer can obtain. Today‘s consumer expects near-instant decisions, and AI and machine learning are helping drive this.

To help banks make more informed credit decisions and determine the risk of lending to a customer, FICO is using machine learning. Researchers at MIT also found that by using machine learning, banks could reduce the number of delinquent customers by up to 25%.

6. Chatbots

Historically, chatbots offered rudimentary answers to simplistic questions, most of which were achieved by identifying specific keywords and returning a canned response. This was often frustrating for users, but artificial intelligence is transforming this field.
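A keyword-and-canned-response bot of the kind described above can be sketched in a few lines. The keywords, responses, and the `reply` function are hypothetical and exist only to illustrate the technique and its limits.

```python
import re

# Hypothetical keyword-to-response table for a banking help bot.
CANNED_RESPONSES = {
    "balance": "You can view your balance under Accounts > Overview.",
    "password": "Use the 'Forgot password' link on the sign-in page to reset it.",
    "hours": "Our branches are open 9am to 5pm, Monday to Friday.",
}
FALLBACK = "Sorry, I didn't understand that."

def reply(message):
    """Return the canned response for the first known keyword in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keyword, response in CANNED_RESPONSES.items():
        if keyword in words:
            return response
    return FALLBACK

print(reply("How do I reset my password?"))
print(reply("I never asked to change my password, what happened?"))
print(reply("Can I get a mortgage?"))
```

The second message gets the same reset instructions as the first, even though the customer is reporting a problem rather than asking how to reset, which is exactly the frustration keyword matching tends to cause.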

Advancements in Natural Language Processing and machine learning allow chatbots to understand the semantic orientation of each word in a sentence and derive true meaning. Doing this allows the chatbot to create some context of what a customer is talking about and ask relevant questions or provide solutions to customer queries.

Bank of America

One of the largest U.S. banks is using a voice and text-enabled chatbot called Erica. Erica can send customers notifications or help customers make better financial decisions.

JPMorgan Chase

JPMorgan Chase launched a bot called COIN, which allows the bank to analyze complex legal contracts faster and more efficiently than a human ever could.

COIN can also undertake the following tasks:

- Parse emails for employees

- Grant access to software systems

- Reset passwords

To date, this has saved the bank 360,000 hours in manpower!

7. Social Networking

Facebook

With almost 2 billion users on the platform, Facebook owns one of the largest datasets on the planet. Its users share vast quantities of content, whether it be in text, image, or video format.

Consider the uploading of a photograph: Facebook will automatically highlight faces and suggest friends to tag from within the user’s social graph.

But how can Facebook do this in near real-time? You’ve guessed it: AI.

By leveraging facial recognition software and neural networks, Facebook can identify with reasonable accuracy who each person is. Facebook acquired an Israeli facial recognition tech firm, Face.com, in 2012 for $55-60 million, which has helped drive this. Facebook has also been investing in this technology internally.

Snapchat

In 2015, Snapchat introduced “facial filters”. These track facial movements and allow users to add digital masks that overlay their faces and follow them as they move. The feature uses AI technology originally developed by a Ukrainian company called Looksery, which has patents on using machine learning to track movements in video.

8. Real Estate

Elements of artificial intelligence have been used in real estate for some time now. Property listing platforms can match buyers to new properties within minutes of the listings being shared online. It goes further than simply matching keywords.

Roof.AI is set to change real estate by integrating artificial intelligence into the heart of all real estate activities. Some of the features include, but are not limited to:

- task automation

- lead generation

- Facebook Messenger integration

“Roof Ai is an AI-powered messaging service that enables smart conversations between real estate businesses and their customers.

The messaging service is backed by a proprietary CRM. The CRM is used by the real estate teams to manage the requests and assist the chatbot in case human intervention is needed. It‘s also an analytics tool that helps them monitor everything that is happening on our messaging channels.

Most real estate websites struggle to achieve a 2% conversion rate. And the main reason for that is the lack of engagement with the visitors on these sites. Roof Ai helps increase conversion by a factor of 8.”

Broker vs Bot

Inman, a real estate publication, launched a challenge affectionately titled “Broker vs Bot”. The challenge, which was conducted in Denver, asked a local real estate journalist to play the role of “buyer” and select three homes that he liked from local real estate listings.

Then, on three separate dates, Inman asked three separate real estate brokers to compete against a bot, “Find More Genius,” to recommend homes like one of the homes selected by the buyer. The “buyer” was then asked which one of the recommended homes he preferred. On all three dates, Find More Genius's choices were selected.

Does this mean that AI will replace agents?

We can‘t say for sure, but one thing is certain: businesses that adopt emerging technologies like AI will stay one step ahead of the curve.

9. Drones

You’re probably familiar with pilotless drones; they’ve been used by the military for years now. In recent years, drones have also made the switch from the military world to the civilian world.

Let‘s explore some examples of how drones are using artificial intelligence.

Life Saving

An engineer at the Technical University of Delft, one of the world‘s leading drone research hubs in the Netherlands, started to investigate whether drones could reach a heart attack patient faster than an ambulance.

By working with ambulance services in Amsterdam, Alec Momont established that the typical response time for a cardiac arrest call is approximately 10 minutes.

Momont went on to build a drone prototype that carries a DIY defibrillator and aims to reach the patient in six minutes. Momont’s vision is for drones to be part of a wider emergency services response team: someone witnessing a heart attack could call 112 (the equivalent of 911 in the US or 999 in the UK), and the call handler would dispatch a drone. Using a two-way video link connected to the drone, a medic could talk the witness through the necessary steps of using the defibrillator.

One can see the obvious advantage of having such technology in rural areas or difficult-to-reach locations.

Hover Camera

Zero Zero Robotics’ “Hover Camera,” which pitches itself as a “throw and shoot” camera, hovers in the air and is powered by bespoke artificial intelligence, which has been coined “Embedded AI”.

The firm developed proprietary technology that fuses a suite of state-of-the-art AI with a PCB the size of two US quarters!

Weighing only 238 grams, the self-flying camera can be carried around. It‘s like having your own self-flying personal photographer. Once in the air, the drone automatically finds and follows its owner, while recording their everyday life from an entirely new angle. It‘s all possible thanks to advanced artificial intelligence facial recognition algorithms.

Logistics

In December 2016, a British farmer, Richard Barnes, received an order placed on Amazon for a bag of popcorn and an Amazon Fire TV Stick.

What was different about this delivery?

It took only 13 minutes from the point of order for the goods to be delivered, and the order was fulfilled by an autonomous drone!

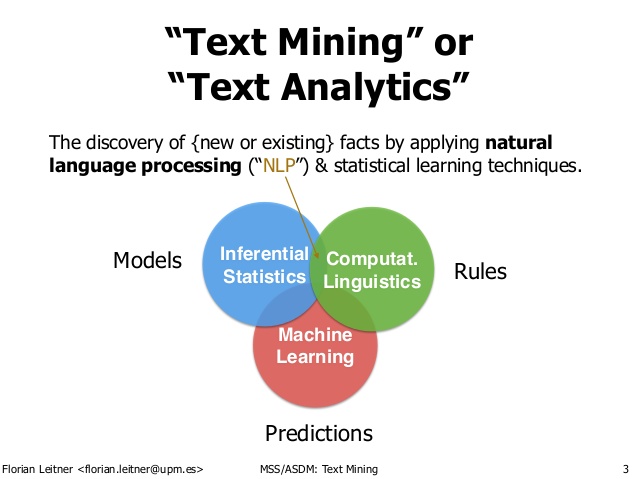

10. Text Analytics and NLP

Text Analytics and NLP are intertwined; without Natural Language Processing (NLP), the machine can‘t determine the semantic orientation of the words (make sense of the order of the words and what they mean).

NLP allows humans to communicate with the machine in natural language. Let‘s explore some examples of text analytics and NLP.

Customer Reviews and Sentiment Analysis

Consumers often leave comments or reviews on specific products or services that they purchase. Quite often, the volume of user-generated data being created is vast and simply cannot be reviewed by a human at scale. This sort of text is also unstructured, which only adds to the problem. Text analytics, NLP, and AI are ideal for these sorts of tasks, however.

By applying techniques such as sentiment analysis and part-of-speech (POS) tagging, businesses can find out how consumers feel about their product, brand, or service.
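In its simplest form, sentiment analysis can be approximated by counting words from positive and negative word lists. The sketch below is a deliberately naive, lexicon-based illustration with made-up word lists; commercial services generally rely on trained models rather than a handful of hard-coded words.

```python
import re

# Tiny, made-up lexicons for illustration only.
POSITIVE = {"awesome", "great", "love", "excellent", "good"}
NEGATIVE = {"awful", "terrible", "hate", "poor", "bad"}

def sentiment(text):
    """Label text by comparing counts of positive and negative words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("this phone is awesome"))             # positive
print(sentiment("terrible battery and poor screen"))  # negative
print(sentiment("it arrived on Tuesday"))             # neutral
```

A word-counting approach like this misses negation and sarcasm ("not good" still counts as positive), which is one reason trained models are preferred at scale.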

In recent years, we have seen the democratization of sentiment analysis in that it‘s now being offered as a service. Some of the companies that offer this sort of functionality include, but aren‘t limited to:

- Microsoft

- IBM

- Monkey Learn

- Social Opinion

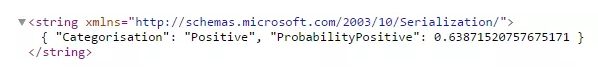

They offer REST APIs that integrate easily with your existing software applications. For example, using the following publicly available Sentiment Analysis REST API from UK start-up Social Opinion, we pass in the text, “this phone is awesome”:

http://api.socialopinion.co.uk/api/sentiment/?text=phone%25awesome&token=00000

The REST API then returns the following response:

In the response, we can see the text has been identified as expressing positive emotion with a 64% probability of that being true.
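A request URL for an API like this is usually assembled programmatically so that the text is encoded safely. The sketch below builds such a URL for the full sentence using only the endpoint and the `text` and `token` parameter names visible in the URL above; the function name is hypothetical, the token is a placeholder, and no request is actually sent.

```python
from urllib.parse import urlencode

# Endpoint and parameter names are taken from the example URL in this article.
BASE_URL = "http://api.socialopinion.co.uk/api/sentiment/"

def build_sentiment_url(text, token):
    """Return the request URL with the query string safely encoded."""
    return BASE_URL + "?" + urlencode({"text": text, "token": token})

url = build_sentiment_url("this phone is awesome", "00000")
print(url)
# http://api.socialopinion.co.uk/api/sentiment/?text=this+phone+is+awesome&token=00000
```

Encoding the query string this way avoids malformed requests when the review text contains spaces, ampersands, or other reserved characters.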

AdTech

This is probably one of the more mature forms of artificial intelligence and machine learning in operation online. Have you ever looked at products on Amazon, then moments later noticed similar products being displayed in your Facebook or Twitter feed?

By tracking what you “Like” and what you‘ve viewed and the comments you post and share, machine learning can, with relative accuracy, place marketing creatives in your news feed on social channels, thereby improving conversion rates for business.

AdTech is such a lucrative vertical that companies such as Twitter have launched developer initiatives like #Promote to encourage the development of AI-based software to drive online sales.

Planning to Create an AI Solution?

In this post, we‘ve covered 10 examples of artificial intelligence in the real world, ranging from virtual personal assistants to smart cars, flying drones, and more.

Businesses will continue to build innovative solutions to complex problems, and developments in artificial intelligence show no signs of slowing down.

If you are planning to create an AI solution and need help, contact DevTeam.Space via this link, and one of our account managers will get back to you.

Frequently Asked Questions

AI has a wide variety of applications, including helping insurance companies to reduce fraud, helping hospitals automate drug reordering, and in finance to improve stock trading.

Artificial intelligence is the ability of a computer program to learn. More complex AI systems will have the ability to understand emotions, teach themselves new skills, and eventually be self-aware.

Current technologies are not sophisticated enough to even have a reasonable chance of being self-aware. In the future, when the systems become extremely complex and advanced, this is set to be a huge debate.