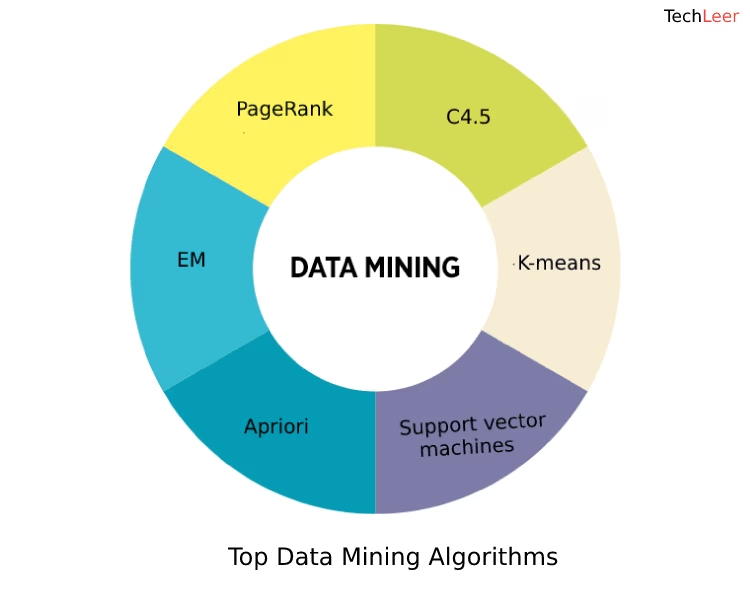

What are the Top 10 Data Mining Algorithms?

Are you interested in learning more about data mining algorithms? Or maybe you're wondering which algorithm is the best for data mining? You've come to the right place! This blog discusses the top 10 data mining algorithms.

In this article

To understand these data mining algorithms better, it is necessary that you go through some important terms first. Here are some of the main ones.

Terminology

- Classifier - A program that sorts data entries into different classes. E.g., a classification algorithm might sort cars into classes such as sedans, SUVs, hatchbacks, etc.

- Supervised learning - Algorithms that need a 'training' set of data to learn from. E.g., a set of patients and symptoms that have already been marked 'sick' or 'well' by a doctor.

- Unsupervised learning - Algorithms that don't need any marked or labeled training data set to work properly.

- Outliers - Data points that are out of the usual range. E.g., in a test with most scores between 40 and 45, a score of 100 would be an outlier.

- Noisy data - Data with lots of outliers

Top 10 Data Mining Algorithms

1. C4.5

The first on this list is C4.5. It is a classifier, meaning it takes in data and attempts to guess which class it belongs to.

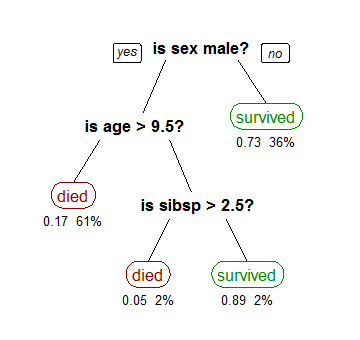

C4.5 is also a supervised learning algorithm and needs training data. Data scientists run C4.5 on the training data to build a decision tree. This can then be used to classify new information.

Let's take a look at an example. Say we had a sample of data showing who survived the sinking of the Titanic. We also know their sex, age, and the number of siblings they had on board. Running C4.5 might generate a decision tree like the one below.

Get a complimentary discovery call and a free ballpark estimate for your project

Trusted by 100x of startups and companies like

Now we can use this decision tree on new data to predict whether a passenger survived or not.

The main problem with C4.5 is overfitting when using noisy data. This means the decision tree it generates pays too much attention to outliers and will make mistakes if there are too many in the dataset.

2. K-means

K-means is a very different type of data mining algorithm from C4.5. It is an unsupervised learning algorithm, meaning it doesn't need labeled training data, and works even if your data isn't already marked or classified.

Rather than classifying data, the goal of K-means is to group data points together based on how similar they are. These groups are called "Clusters". A very simple example might be to group a set of people together by age and blood pressure. The 'K' simply tells us there can be any number of groups.

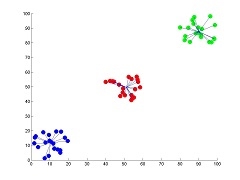

It's a popular data mining algorithm because it's simple to implement and works quite well for data analysis. Here's an example of a data set that has been grouped using this technique.

K-means works by finding points called "centroids", then assigns each point in the data to a cluster based on its closest centroid. As you can guess, the trick here is to find the optimum number and position of centroids to group the data properly.

This is done in a very clever way. Here's a basic version of the algorithm:

- Choose the number K of centroids you want.

- Randomly select positions for the centroids in multidimensional space.

- Assign each data point to its closest centroid, creating K clusters.

- Readjust the positions of the centroids to be the average (or mean) position of these new clusters (this is why it's called K-means).

- Repeat steps 3 and 4 until the centroids no longer move!

Once the centroids stop moving, the algorithm has finished, and the data has been clustered. For best results, K-means is usually run multiple times with different random starting points. The one with the tightest clusters is probably the best result.

K-medoids is a similar version of this algorithm, but it is more resilient to outliers.

3. Support Vector Machines (SVM)

SVM is another supervised classifier algorithm like C4.5. The difference is that it classifies data into two groups. This can be thought of as a line separating data points on a graph.

The thing that makes the Support Vector Machine algorithm really cool is that it can find complex curved lines separating points more accurately than a straight line. It does this with a clever trick using dimensions.

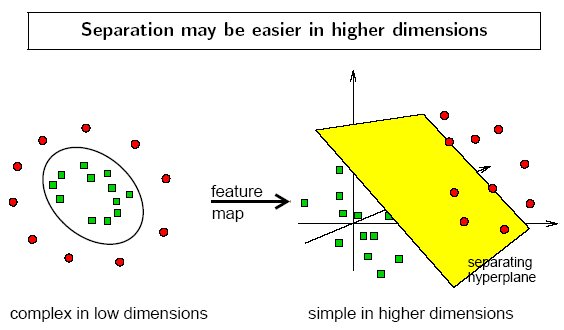

SVM takes a dataset and projects the points into a higher dimension. By doing this, it can more easily separate the points into two groups. Here's a great example.

The two-dimensional data on the left can't be separated with a straight line. To fix this, SVM projects points into the 3-dimensional space shown on the right. Now, the data can easily be split into two classes with a plane that SVM can find automatically.

Of course, this won't always work, so you'll need to experiment with different classifier algorithms to see which one is best for a particular problem.

4. Apriori

Apriori is an algorithm that learns which items in data are commonly associated with each other. This can be very useful for grouping similar items together in tables.

For example, your company might have a database of customers and transactions. By running the Apriori algorithm on this data, you might find that coffee beans are commonly bought together with coffee machines.

You could use this information to display product recommendations at the right time to increase sales.

The algorithm itself is quite complicated. It is usually considered unsupervised learning, as it can be used to explore sets of unlabelled data. But it can be modified to give classifications, too.

1,200 top developers

us since 2016

5. Expectation-Maximization (EM)

EM is a clustering algorithm that uses statistical models to group data.

You might know that the results of test scores usually follow a bell curve. That is, most of the test results will fall somewhere in the middle, with a few getting very high scores and a few getting very low scores.

Bell curves are used all the time in statistics and show up in all kinds of data. On paper, it looks something like this:

EM attempts to guess the curve that represents a certain data set. For example, given a sample set of test scores, guess the bell curve that represents the whole set. Once it's got this curve, it can be used to group new data into 'grades'.

This works quite well in practice and has many different applications to K-means.

6. PageRank

PageRank is one of my favorite algorithms. It actually affects your life every day, and mine. You probably know it better as the main algorithm that powers Google searches.

Back before PageRank was invented by the guys at Google, internet searches were terrible. They basically counted the number of times a keyword appeared on a web page to determine how relevant it was to that keyword. This leads to lots of spam and bad results. So how do you fix that?

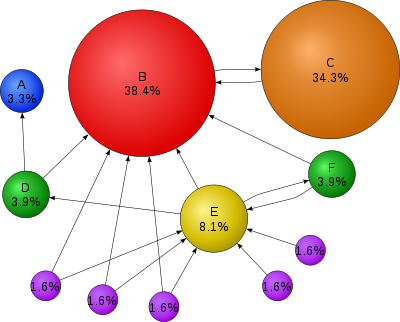

You can think of PageRank as a kind of voting algorithm. It counts the number of times a web page is linked to by other pages. The more links pointing to a page, the more important that web page is considered. Also, if a web page is found to be important, its links will also be more important and carry more weight.

PageRank can be used for more than just ranking web pages. It can be extended to any kind of graph where things are linked together.

Here's an example of a linked graph that has been run through PageRank. The sizes and percentages show how 'important' the nodes were found to be based on links to them.

7. AdaBoost

AdaBoost is a data mining algorithm that builds a good classifier out of lots of bad classifiers.

In machine learning, a bad learner is a classifier that doesn't work very well, barely better than random chance. However, if you have a few of them, you might be able to combine them in a way that ends up being pretty good!

AdaBoost does this by using a training set of data, so it is supervised learning. It iterates through the training data on each bad learner, working out which is the best at each step.

Each new round, the misclassified points are more important. Eventually, a more complex decision tree is built from the simpler parts. This new tree should be better at classifying new data than any of the original learning algorithms.

The cool thing about AdaBoost is that it can be used with other algorithms. The 'bad learner' building blocks can be the simple decision trees built by other algorithms.

8. K-Nearest Neighbors (KNN)

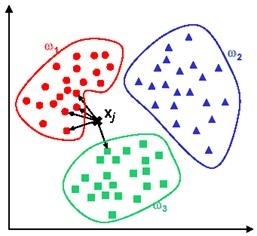

K-nearest neighbors is one of the simpler data mining algorithms. Like most of the list, it is also a classifier (yes, classification algorithms are very important in data mining). However, it does things a little differently from the ones I've already described.

K-Means, AdaBoost, and SVM are all eager learners. They take training data and build a classifier that can then be used on the latest data.

KNN is a lazy learner. It doesn't actually do anything until it is given new, unlabelled data. When this happens, it finds the 'k' nearest neighbors to the new data point (i.e., the ones that are most similar) and assigns a class similar to those. Here's an example:

The new point X is given as new input. Of its five closest neighbors, four are in the red class, and one is green. Based on this, X is classified as red, as most of its neighbors are red. Pretty simple!

9. Naive Bayes

This actually refers to a group of algorithms that share a common — naive — assumption.

That assumption is that each feature in a set of data is completely uncorrelated with all other features in the set.

For example, say we have data about hospital patients. The features of the data include zip code, height, and age. Those three things probably aren't correlated — your zip code doesn't determine your height or age very much.

Here, the Naive Bayes assumption is fine.

Using this assumption, some very simple math can give us a classifier that can predict the class of new data.

But this assumption isn't always true. Let's say we extend our hospital patient data set to include things like blood pressure, cholesterol, and weight. These probably are correlated, so will the algorithm still work?

The answer is usually yes. Naive Bayes classifiers work surprisingly well, even on data with correlated features.

10. CART

The last data mining algorithm on the list is CART, or Classification and Regression Trees. It's an algorithm used to build decision trees, just like many of the other algorithms we've discussed.

CART can be thought of as a more statistically grounded version of C4.5, and can lead to more robust decision trees. One of its defining features is that it constructs binary trees, meaning each internal node has exactly two edges.

The reason to use this algorithm over C4.5 is that it is less susceptible to outliers. That means it will work better on a noisy data set where not all data points are correctly classified.

Thinking of Adopting Data Mining Methods in Your Business?

Data analysis of available business data helps stakeholders and product managers to make informed business decisions. Data mining algorithms are an important part of this statistical analysis process. Mostly, the implementation of the data mining process in business processes requires exceptional domain knowledge, data science skills, and experience in using data mining.

For this reason, it is better if you partner with skilled data scientists for your data mining project. DevTeam.Space can help you here. We have a community of expert software developers who are experienced in developing market-competitive products using cutting-edge technologies like artificial intelligence, machine learning, etc.

You can get in touch by describing your initial data mining requirements via this quick form. One of our technical managers will get back to you to further answer your questions and to link you with software engineers with the relevant skillset.

Related to data mining algorithms articles

- Top 10 Machine Learning Algorithms Examples

- 10 Popular Machine Learning Tools

- How to Develop a Machine Learning Algorithm?

- 7 Best AI Software Development Tools 2026

- 5 Ways to Implement Predictive Analytics in Business

- SaaS Development Guide for Founders: 12 Steps

- DevTeam.Space Blog

- 5 Use Cases of Machine Learning in App Development

- 10 Best AI Development Tools: For Developers

- 10 AI Development Companies That Earned My Recommendation in 2026

Frequently Asked Questions on Data Mining Algorithms

An example of data mining can be seen in the social media platform Facebook, which mines people's private data and sells the information to advertisers.

Data mining can be applied to any form of data. Examples include pictorial data mining, message mining, online behavior mining, and viewing habit mining.

Influential data mining algorithms like link analysis algorithms, genetic algorithms, etc., are used by all large companies, websites, and apps to help make their services more accurate while boosting revenue from advertising, etc.