How to Scale Your Jakarta EE Application

Interested in how to scale your Jakarta EE application? You've come to the right place. Here, I am going to provide a brief overview of Jakarta EE and explain how to scale Jakarta EE applications.

In this article

- Why Jakarta EE?

- Types of Scaling: Which One to Choose?

- How to Scale Out a Jakarta EE Application

- Frequently Asked Questions on Scaling Jakarta EE Applications

Why Jakarta EE?

Jakarta EE, previously known as Java EE, is the enterprise edition of the Java Programming Language Platform. It is built on the Java SE platform and is a great runtime environment for large-scale app development. Jakarta EE positions itself as an open-source framework for developing cloud native Java applications.

It is much loved because it offers important features like scalability, as well as being an excellent platform to create reliable enterprise applications.

To learn more about the platform, read this overview.

Jakarta EE development comes with many conveniences, such as IDE wizards for creating applications that use Contexts and Dependency Injection (CDI), Java annotations in place of XML deployment descriptors, and a built-in authentication service.

Jakarta EE is able to achieve simplicity by standardizing and automating many aspects of application development through APIs.

For example, it comes with the Jakarta Transactions API to manage the transaction life cycle, the Jakarta Messaging API for asynchronous messaging, the Jakarta Data API for data access, and the Jakarta Persistence API for object persistence, which includes the Criteria API for building queries.

The Jakarta EE 10 specifications include Jakarta Enterprise Beans. It lets developers implement business logic in session beans, which can be packaged inside a web application without creating a separate module.

In the latest Jakarta EE 11, some of the enhancements in Jakarta EE technologies include:

- Support for Java SE Records as classes that can be embedded

- Optional persistence.xml configuration

- New Jakarta Data specification

Because of this standardization, it becomes easier to follow good development practices, which make scalable apps more reliable.

Normally, software developers use the Eclipse or NetBeans IDE, the Java Development Kit (JDK), and the open-source GlassFish server for creating Jakarta EE applications. NetBeans has built-in support for Jakarta Faces and Jakarta Server Pages applications, among others.

Read this tutorial for getting started with Jakarta EE applications.

With more and more enterprises using Jakarta EE as a platform to design and run increasingly complex applications, scalability has become an extremely important issue for Java developers.

What do we mean by scalability?

Scalability, while often treated as synonymous with performance, actually refers to the ability of a system to expand the number of available resources to accommodate a surge in demand.

To compare the two terms, performance is the measure of how fast a system can respond to the requests it receives, while scalability refers to the number of requests that a system can handle simultaneously.

If, for example, a system has a very good performance level but cannot handle more than, say, 100 requests at a time, then it would limit the usefulness of the application that is running on it.
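As a rough illustration of such a ceiling, the sketch below caps the number of requests that can be in flight at once using `java.util.concurrent.Semaphore`. The class name `CappedServer` and the limit of 100 are assumptions made for this example; they are not part of any Jakarta EE API.

```java
import java.util.concurrent.Semaphore;

// Hypothetical server that can hold only a fixed number of requests in flight.
class CappedServer {
    private final Semaphore slots;

    CappedServer(int maxConcurrentRequests) {
        this.slots = new Semaphore(maxConcurrentRequests);
    }

    // Returns true if the request was admitted, false if the server is full.
    boolean tryBegin() {
        return slots.tryAcquire();
    }

    // Called when a request finishes, freeing its slot for the next caller.
    void end() {
        slots.release();
    }

    public static void main(String[] args) {
        CappedServer server = new CappedServer(100);
        int admitted = 0;
        for (int i = 0; i < 150; i++) {          // 150 requests arrive at once
            if (server.tryBegin()) {
                admitted++;
            }
        }
        // prints "admitted=100, rejected=50"
        System.out.println("admitted=" + admitted + ", rejected=" + (150 - admitted));
    }
}
```

However quickly each admitted request is answered, the remaining 50 callers are turned away until a slot frees up, which is the scalability limit rather than a performance one.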

Just imagine the effect on the usability of an app like Uber if it were unable to scale effectively.

“After all, if Uber doesn’t work, people get stranded, and that was really, really interesting to me.” - Scalable Systems & Scalable Careers: A Chat with Uber’s Sumbry

With as many as 3 billion people able to access applications and websites, these programs must be able to scale effectively.

Scaling any application, no matter how simple or complex it might be, can be a nightmare. When not done properly, it can lead to inadequate fixes and patches that actually have a negative impact on system performance.

Scaling a Jakarta EE application should be a well-planned process that takes into consideration a myriad of different issues. To give you an idea of what scaling involves, first, I will take a more in-depth look at the different types of scaling.

Types of Scaling: Which One to Choose?

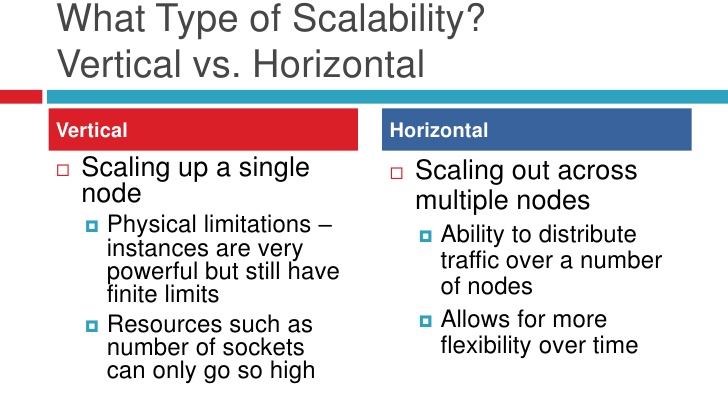

There are two types of scaling that can be incorporated into your application.

Scaling Up

Scaling vertically (also called scaling up) refers to adding physical resources to an existing server. This may take the form of more CPUs, more memory, or a larger resource allocation.

This type of scaling can be used to enhance virtualization on a Web server and to provide more resources to virtual Operating Systems installed on a virtual machine.

Although scaling up is a good choice when the number of users is still relatively small, for larger applications, scaling up proves to be a lot more expensive.

The main resources that can be added to a server to scale up are memory and processing power, i.e., CPU.

For those companies looking to add scalability to small-scale applications, I will briefly go into detail on the advantages and disadvantages of adding both these resources to scale up your application.

Adding Memory

Having sufficient memory is of utmost importance when scaling any system.

It is particularly important for IO and database-intensive applications, where, instead of querying the database again and again, a time-intensive task, vital information can just be cached in the memory so it is readily available upon request.

As a result, scaling up memory has a huge effect on system performance.
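The caching idea can be sketched with a small read-through cache built on `ConcurrentHashMap.computeIfAbsent`. The class name `ReadThroughCache` and the loader function, which stands in for a slow database query, are assumptions made for illustration.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Read-through cache: the loader (a stand-in for a slow database query)
// runs only the first time a key is requested.
class ReadThroughCache<K, V> {
    private final Map<K, V> cache = new ConcurrentHashMap<>();
    private final Function<K, V> loader;

    ReadThroughCache(Function<K, V> loader) {
        this.loader = loader;
    }

    // Returns the cached value, loading and storing it on the first request.
    V get(K key) {
        return cache.computeIfAbsent(key, loader);
    }

    public static void main(String[] args) {
        AtomicInteger dbQueries = new AtomicInteger();
        ReadThroughCache<Integer, String> users = new ReadThroughCache<>(id -> {
            dbQueries.incrementAndGet();         // pretend this is an expensive query
            return "user-" + id;
        });
        users.get(42);
        users.get(42);
        users.get(42);
        // prints "lookups=3, database queries=1"
        System.out.println("lookups=3, database queries=" + dbQueries.get());
    }
}
```

This sketch never evicts entries, so the cache grows with the number of distinct keys. That is exactly why extra memory helps: the more entries fit, the fewer trips to the database are needed. A production cache would also need an eviction and expiry policy.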

Java incorporates Garbage Collection, which automatically recycles dynamically allocated memory from objects no longer in use. This frees up memory that applications can then use to increase their performance.

However, during parts of a garbage collection cycle, application threads are paused. This can lead to critical delays in latency-sensitive applications such as real-time IoT systems.

In scenarios like this, where longer intervals between consecutive garbage collections are needed, a larger heap helps by delaying the point at which garbage collection has to run to free up memory in the first place.
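Before adding memory, it helps to see how much heap the JVM may use and how much time it has spent collecting garbage. The sketch below reads both through the standard `java.lang.management` API; the class name `GcReport` is an assumption for this example. The heap ceiling itself is typically raised with the standard `-Xmx` JVM option.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

// Reports the heap ceiling and cumulative garbage-collection activity of this JVM.
class GcReport {
    // Total time, in milliseconds, reported by all collectors so far.
    static long totalGcMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long t = gc.getCollectionTime();     // -1 if the collector does not report it
            if (t > 0) {
                total += t;
            }
        }
        return total;
    }

    // Maximum heap the JVM will attempt to use, in MiB.
    static long maxHeapMiB() {
        return Runtime.getRuntime().maxMemory() / (1024 * 1024);
    }

    public static void main(String[] args) {
        System.out.println("max heap (MiB): " + maxHeapMiB());
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.println(gc.getName()
                    + ": collections=" + gc.getCollectionCount()
                    + ", time(ms)=" + gc.getCollectionTime());
        }
    }
}
```

Running this before and after a load test gives a rough picture of whether collections are frequent enough to justify a larger heap.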

Adding CPUs

Most Web applications are designed on the basis of a simple request and response server architecture. The user sends a request, and data is fetched from the database and relayed to the user in a specific format.

Generally speaking, these applications are not CPU-centric, meaning they don't need bigger, more powerful CPUs in order to allow for scalability.

Scaling up CPUs for such applications leads to a waste of resources because the CPU only utilizes a small percentage of its total processing power.

Scaling up also has a slight disadvantage when it comes to system availability. Availability refers to the amount of time the application is up and running, otherwise known as uptime. If all the resources are spent on a single server, then any problems with the server will lead to your application going offline to some, if not all, users.

If there is no backup system in place, then this can lead to losses, both monetary and in the form of lost users.

Scaling up is mostly used in specific scenarios where it is deemed to be the best solution.

Scaling Out

Scaling horizontally (also called scaling out) is the process of adding more nodes to the system.

One example of this strategy can be seen with major tech companies like Facebook and Google. These companies add more and more servers around the globe to cater to an ever-increasing number of user requests.

Such examples show the enormous value of scaling out. Without such an approach, companies would be forced to purchase massive supercomputers, which would be impractical due to the massive cost.

Advantages of scaling out

Scaling out gives your Jakarta EE application a better level of system availability than scaling up does.

If there are multiple servers catering to requests concurrently, then in case any server crashes, there will be other servers that can bear the workload while the crashed server is fixed.

This helps keep users happy because, although the application may run slower for a while, it stays online.

Scaling out also has a number of strategic advantages when it comes to application performance. With Facebook, for example, the ability to store data in local data centers helps keep performance levels high. And this is no small thing.

As this article highlights, “At Facebook, we have unique storage scalability challenges when it comes to our data warehouse. Our warehouse stores upwards of 300 PB of Hive data, with an incoming daily rate of about 600 TB. In the last year, the warehouse has seen a 3x growth in the amount of data stored.”

How to Scale Out a Jakarta EE Application

Scaling out is usually done with the help of a load balancer. It receives all the incoming requests and then routes them to different servers based on availability.

This makes sure that no single server has to absorb all the traffic, so the workload is distributed evenly.

If one server in the cluster fails, the load balancer routes the incoming requests to other servers in the cluster. Load balancers also make scaling out your Jakarta EE application much easier.

Should you want to add another server to the cluster, then the load balancer can start directing traffic to the server right away. This saves valuable time that is otherwise used in complicated configurations of the servers.
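The routing behaviour described above can be sketched as a minimal round-robin balancer. This is an illustration only: the class name `RoundRobinBalancer` and the server names are assumptions, and a real load balancer would also perform health checks instead of relying on explicit removal.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.atomic.AtomicInteger;

// Minimal round-robin balancer; server names are placeholders.
class RoundRobinBalancer {
    private final List<String> servers = new CopyOnWriteArrayList<>();
    private final AtomicInteger counter = new AtomicInteger();

    // A newly added server starts receiving traffic on the next rotation.
    void addServer(String name) {
        servers.add(name);
    }

    // A failed server is taken out so requests go to the remaining ones.
    void removeServer(String name) {
        servers.remove(name);
    }

    // Picks the next server in rotation.
    String next() {
        List<String> snapshot = List.copyOf(servers);
        if (snapshot.isEmpty()) {
            throw new IllegalStateException("no servers available");
        }
        int index = Math.floorMod(counter.getAndIncrement(), snapshot.size());
        return snapshot.get(index);
    }

    public static void main(String[] args) {
        RoundRobinBalancer lb = new RoundRobinBalancer();
        lb.addServer("server-a");
        lb.addServer("server-b");
        System.out.println(lb.next() + ", " + lb.next() + ", " + lb.next());

        lb.addServer("server-c");                // scale out: no other change needed
        System.out.println(lb.next() + ", " + lb.next() + ", " + lb.next());

        lb.removeServer("server-b");             // server-b failed
        System.out.println(lb.next() + ", " + lb.next());
    }
}
```

Round robin is only one possible policy. Routing by current load or response time follows the same shape, with a different rule inside `next()`.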

Pitfalls of using a Load Balancer

Some applications keep session state on the server for each client. This state, held in objects such as the HTTP session, stores user information like shopping cart contents and must be present on the server that handles the request.

With a simple load-balanced architecture, the load balancer can redirect subsequent requests to different servers based on availability. The new server will not have the session states, and the user request cannot be processed.

Every time a user accesses the application, they may be directed to a different server. This means that the user will have to submit all their previous data again to the new server, something which slows network performance.

To counter this problem, Sticky Sessions can be used. Sticky sessions are implemented on the load balancer to make sure that subsequent requests from a client go to the same server every time. This is also known as server-affinity.

This helps resolve the aforementioned problem, but does, however, give rise to another problem.

If the server that created the session for the client crashes, then the next request will be forwarded to another server in the cluster that will not have the state information. This eventuality brings us back to square one.
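Both the sticky-session behaviour and its failure mode can be sketched in a few lines. The classes `AppServer`, `StickyBalancer`, and `StickySessionDemo` are hypothetical and exist only for this illustration; real load balancers usually implement affinity with a cookie or a hash of the client address.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Each server keeps session state only in its own memory.
class AppServer {
    final String name;
    final Map<String, String> sessions = new HashMap<>(); // sessionId -> cart contents

    AppServer(String name) {
        this.name = name;
    }
}

// Load balancer that pins every session ID to the server that first handled it.
class StickyBalancer {
    private final List<AppServer> servers = new ArrayList<>();
    private final Map<String, AppServer> affinity = new HashMap<>();
    private int nextIndex = 0;

    void addServer(AppServer server) {
        servers.add(server);
    }

    // Simulates a crash: the server and all state held in its memory are gone.
    void crash(AppServer server) {
        servers.remove(server);
        affinity.values().removeIf(pinned -> pinned == server);
    }

    // Returns the pinned server, choosing one in rotation for new sessions.
    AppServer route(String sessionId) {
        if (servers.isEmpty()) {
            throw new IllegalStateException("no servers available");
        }
        return affinity.computeIfAbsent(sessionId, id -> {
            AppServer chosen = servers.get(nextIndex % servers.size());
            nextIndex++;
            return chosen;
        });
    }
}

class StickySessionDemo {
    public static void main(String[] args) {
        StickyBalancer lb = new StickyBalancer();
        lb.addServer(new AppServer("server-a"));
        lb.addServer(new AppServer("server-b"));

        AppServer first = lb.route("session-1");
        first.sessions.put("session-1", "cart: 2 items");

        // Sticky: the follow-up request reaches the same server and finds the cart.
        System.out.println(lb.route("session-1").sessions.get("session-1")); // cart: 2 items

        lb.crash(first);

        // After the crash the session is re-pinned to a server that never saw the cart.
        System.out.println(lb.route("session-1").sessions.get("session-1")); // null
    }
}
```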

To counter this problem, we move outwards from a single server and focus on creating integrated server networks.

Suppose all the session state were stored in a single database that every server in the cluster could access. If any server went offline, the state would still be available to whichever server handled the client's next request.

However, the drawback of such a system is that database access can be a time-intensive process that can decrease the performance of the system.
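A minimal sketch of this shared-store approach follows. An in-memory map stands in for the central database, and the names `SharedSessionStore`, `StatelessNode`, and `SharedStoreDemo` are assumptions for illustration. In a real deployment, every `load` and `save` would be a network round trip to the database, which is where the performance cost comes from.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Stand-in for a central database table keyed by session ID.
class SharedSessionStore {
    private final Map<String, String> rows = new ConcurrentHashMap<>();

    void save(String sessionId, String state) {
        rows.put(sessionId, state);
    }

    String load(String sessionId) {
        return rows.get(sessionId);
    }
}

// A stateless application node: it keeps no session data of its own.
class StatelessNode {
    private final SharedSessionStore store;

    StatelessNode(SharedSessionStore store) {
        this.store = store;
    }

    // Reads the cart from the shared store, appends the item, and writes it back.
    void addToCart(String sessionId, String item) {
        String cart = store.load(sessionId);
        store.save(sessionId, cart == null ? item : cart + "," + item);
    }

    String viewCart(String sessionId) {
        return store.load(sessionId);
    }
}

class SharedStoreDemo {
    public static void main(String[] args) {
        SharedSessionStore database = new SharedSessionStore();
        StatelessNode node1 = new StatelessNode(database);
        StatelessNode node2 = new StatelessNode(database);

        node1.addToCart("session-1", "book");    // first request lands on node1
        node2.addToCart("session-1", "pen");     // next request lands on node2

        System.out.println(node2.viewCart("session-1")); // book,pen
    }
}
```

Because neither node holds state, either one can fail or be replaced without the user losing the cart.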

Solutions like Oracle Coherence provide an in-memory distributed solution for clustered application servers. This provides a fast messaging service between servers that can exchange critical data like the user states.

Of the options discussed here, this is the most effective way of keeping user state available on all the servers simultaneously. There is no need for expensive database operations, and the data is reliably transferred to all the servers of an application.
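To make the idea concrete, here is a toy sketch of in-memory replication between nodes. It does not use or imitate the Oracle Coherence API. The class `ReplicatedNode` is hypothetical, and a direct method call stands in for the network message that a real data grid would send.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Toy in-memory replication: every write is copied to all peer nodes.
class ReplicatedNode {
    private final Map<String, String> local = new ConcurrentHashMap<>();
    private final List<ReplicatedNode> peers = new ArrayList<>();

    // Connects two nodes so that each forwards its writes to the other.
    static void link(ReplicatedNode a, ReplicatedNode b) {
        a.peers.add(b);
        b.peers.add(a);
    }

    // Stores the state locally, then pushes the same entry to every peer.
    void put(String sessionId, String state) {
        local.put(sessionId, state);
        for (ReplicatedNode peer : peers) {
            peer.local.put(sessionId, state);    // stands in for a network message
        }
    }

    // Reads are served from local memory, with no database round trip.
    String get(String sessionId) {
        return local.get(sessionId);
    }

    public static void main(String[] args) {
        ReplicatedNode nodeA = new ReplicatedNode();
        ReplicatedNode nodeB = new ReplicatedNode();
        link(nodeA, nodeB);

        nodeA.put("session-1", "cart: 2 items"); // written on node A
        System.out.println(nodeB.get("session-1")); // cart: 2 items, read on node B
    }
}
```

A production data grid additionally handles partitioning, node failure, and conflicting writes, none of which this sketch attempts.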

Final Thoughts on How to Scale a Jakarta EE Application

The Jakarta platform, enterprise edition, is unique in its architecture, and so are the apps designed and built on it.

Even though they may use the same standard libraries and APIs, two separate applications with the same functionality can be designed with different priorities in mind.

This creates problems when a single strategy is used to scale two different Jakarta EE applications.

Scaling out by adding more servers is generally a good way to scale the application to cope with a bigger load. But as I have discussed, the way to do this also depends on the type of application that needs to be scaled.

Understanding the architecture of your application and the different types of scaling, including the strengths and weaknesses of each approach, will ensure that you scale your Jakarta EE application successfully.

If you are looking to outsource software developers experienced in scaling Jakarta EE applications, reach out to DevTeam.Space via this form.

One of our expert technical managers will get back to you to discuss your application's scaling requirements in detail and to connect you with suitable software engineers.

Frequently Asked Questions on Scaling Jakarta EE Applications

Provided that you have the relevant expertise, it is relatively straightforward to scale an application using a suitable scaling approach.

You will need to do a review of your application to ensure that there will be no issues scaling it. Generally speaking, you will need to focus more on upgrading your infrastructure than upgrading your app code.

Java SE is the standard Java specification, with class libraries, a virtual machine, deployment tooling, etc. Jakarta EE (formerly Java EE) adds more structure, with separate client, business, and enterprise layers. Applications requiring large scalability and distributed computing use Jakarta EE.